SSH Keys with Docker and TeamCity

September 19, 2015

Lately I’ve been configuring TeamCity to run builds inside of Docker containers. One class of builds I’ve been setting up is nightly integration testing. These tests use Ansible to provision a variety of cloud resources, including servers, storage backends and load balancers, then install and configure our software and test that it behaves as expected in real-world scenarios (automated cluster failover testing, for example). Because of the nature of these tests, they need to be able to SSH into the provisioned servers - which means they need SSH key access. TeamCity has this covered.

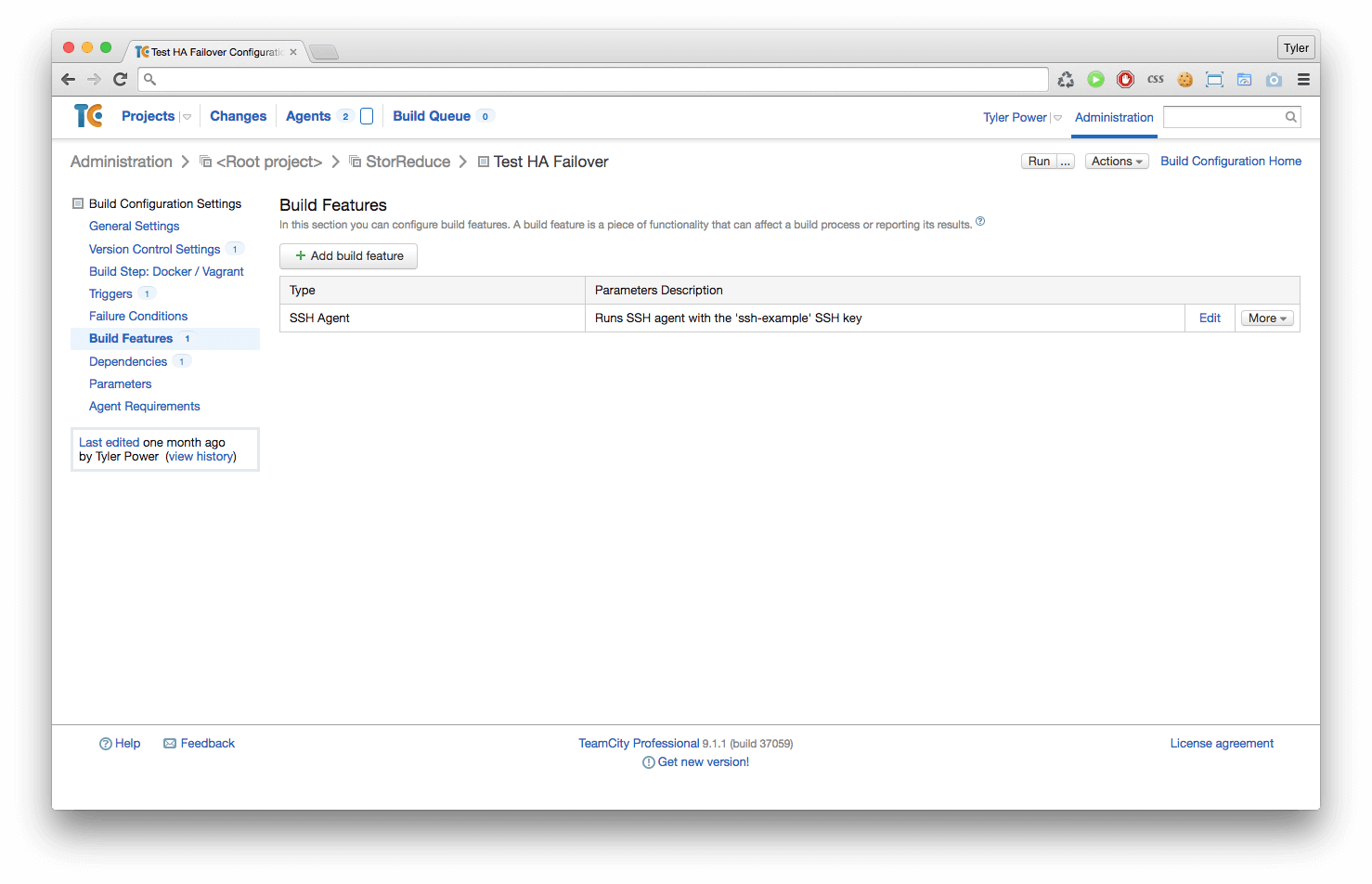

TeamCity has SSH key management built in - you simply upload your key to TeamCity via the web interface, and then on a per-build basis you can tell it to push the key to the build agent and make it available via the local SSH agent for the duration of the build. This is exactly what I needed, except that out of the box it doesn’t work with the Docker plugin: the key is loaded into the SSH agent on the host, and the build’s container can’t see that agent. Luckily for us, because the underlying mechanism TeamCity uses to load the key is the local SSH agent, we just need to make sure the host’s SSH agent is available within the build’s Docker container.

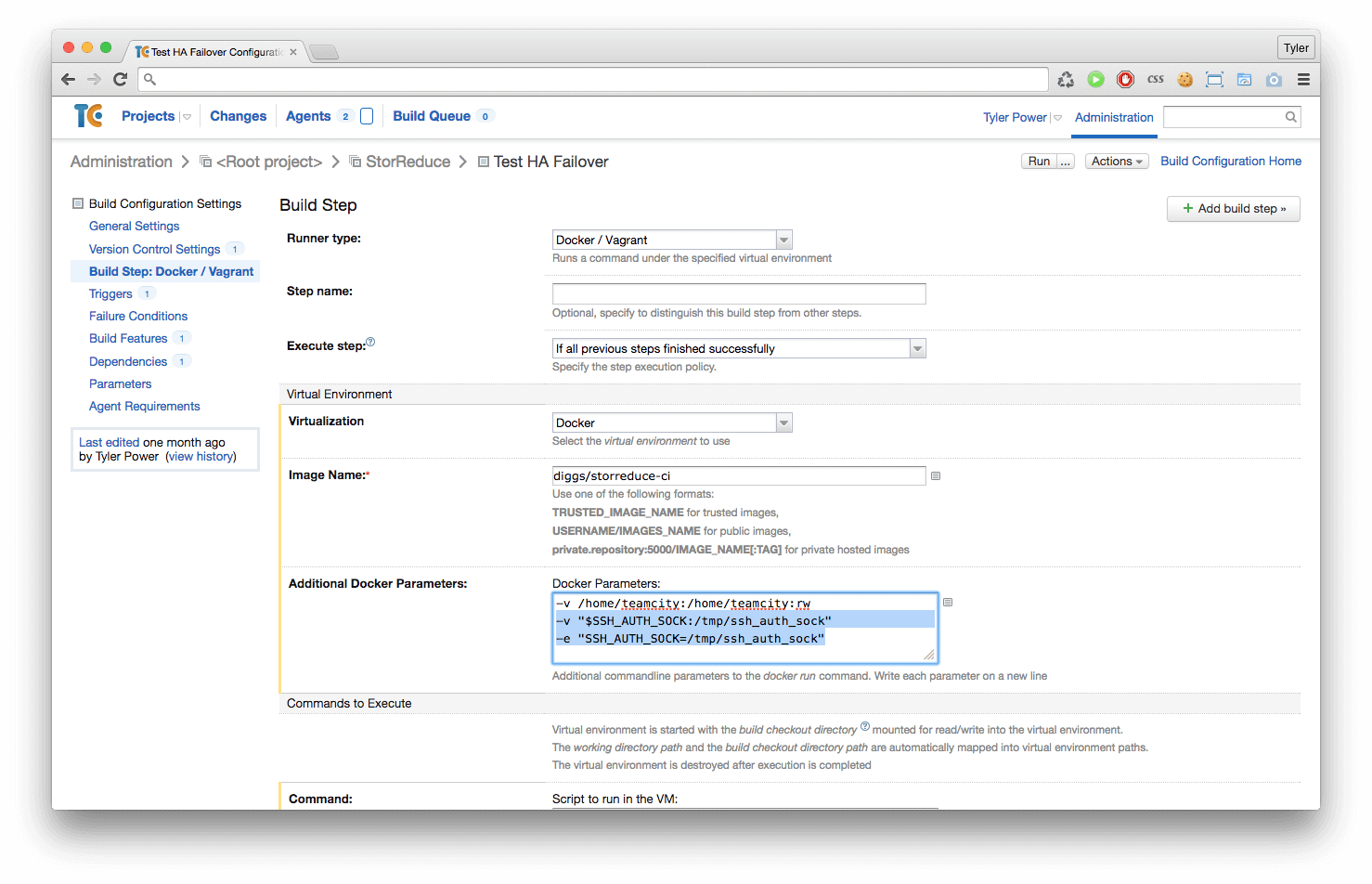

This turned out to be incredibly simple. The trick is to mount the local SSH agent within the Docker container via the -v flag, then to expose the mounted SSH agent within the Docker container via the SSH_AUTH_SOCK environment variable.

TeamCity Docker Step Config:

-v "$SSH_AUTH_SOCK:/tmp/ssh_auth_sock"

-e "SSH_AUTH_SOCK=/tmp/ssh_auth_sock"

After making this tiny configuration change you can then use the SSH Agent build feature just as you normally would, and your Dockerized builds will inherit the SSH key access!
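If you want to sanity-check the two flags outside of TeamCity, something like the following rough sketch might help. It only builds the flag list and refuses to continue when no agent socket is exported on the host. The function name `ssh_agent_docker_args` and the image name in the usage comment are made up for illustration; the in-container path `/tmp/ssh_auth_sock` is the one from the step config above.

```shell
#!/usr/bin/env bash
# Sketch only: prints the docker flags that forward the host's SSH agent,
# one argument per line. ssh_agent_docker_args is a hypothetical helper name.

ssh_agent_docker_args() {
  # Without an exported agent socket there is nothing to mount.
  if [ -z "${SSH_AUTH_SOCK:-}" ]; then
    echo "SSH_AUTH_SOCK is not set; is an SSH agent running?" >&2
    return 1
  fi

  # Mount the host socket at a fixed path, then point the container's
  # SSH_AUTH_SOCK at that path.
  printf '%s\n' \
    "-v" "${SSH_AUTH_SOCK}:/tmp/ssh_auth_sock" \
    "-e" "SSH_AUTH_SOCK=/tmp/ssh_auth_sock"
}

# Hypothetical usage (image name is an assumption; it needs an OpenSSH client):
#   mapfile -t agent_args < <(ssh_agent_docker_args)
#   docker run --rm "${agent_args[@]}" my-build-image ssh-add -l
```

Running `ssh-add -l` inside the container should list the same keys as it does on the host if the socket was forwarded correctly.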